On 13 December, the US Department of Energy announced that scientists in the US had achieved nuclear ignition: a net gain in energy, with the fusion reaction releasing more energy than the lasers driving it delivered. This was the first time scientists had done so.

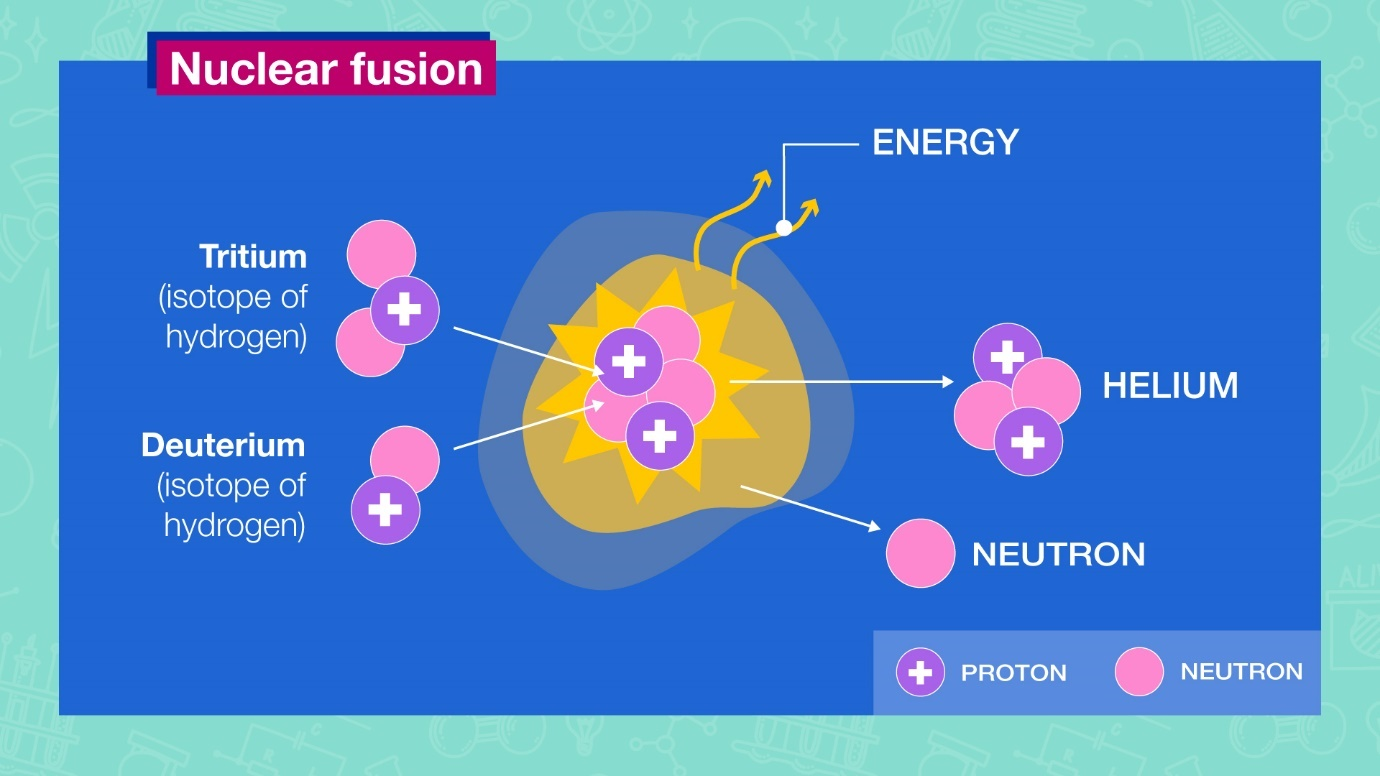

Nuclear fusion, the reaction that powers our Sun and all stars, entails smashing atoms together to form a heavier atom, releasing a large amount of energy in the process. The reaction has the highest energy potential of all known reactions, about four times that of nuclear fission, the atom-splitting reaction used in conventional nuclear plants.

Following this announcement, we came across multiple posts on Twitter and other social media platforms suggesting that this development had delivered ‘unlimited clean energy’, and that climate change was therefore a hoax or mitigation efforts unnecessary.

One of the social media posts includes a link to a clip from Alex Jones’ InfoWars. In it, Jones claims that the news signals the arrival of ‘free energy’. He adds, without substantiation, that the US ‘deep state’ was forced into making its announcement due to impending announcements from Japan, China and Russia.

We investigated whether it was true that this news indicated that nuclear fusion had been ‘solved’ and created a new unlimited, free and clean energy source.

An Explosive Development

The achievement of nuclear ignition does appear to be a major milestone for the scientific community as it validates the scientific principles behind the experiments and indicates that the only barriers to advancement are technological and economic in nature.

It is also true that nuclear fusion represents a potential source of clean (carbon-free) and safe energy. The fusion process produces helium, a non-reactive gas, while nuclear fission produces highly radioactive plutonium. Nuclear fusion does produce some radiation, but this radioactivity is weaker than the waste from nuclear fission and lasts only about 100 years compared to 40,000 years for the latter.

Furthermore, accidents similar to those at Fukushima or Chernobyl are unlikely in fusion reactors, as the reaction can be stopped and its effects confined in case of any instability. In fission reactors, by contrast, the radioactive core still needs to be cooled after the reactor shuts down.

These positives were recognised by US Secretary of Energy Jennifer Granholm, who said in a statement that ‘this milestone moves us one significant step closer to the possibility of zero-carbon, abundant fusion energy powering our society’.

The Core of the Issue

As with most scientific experiments, a major concern is the ability to reproduce the results and then replicate them at commercial scale. While nuclear fusion shows promise as a source of energy in the lab, the process requires significant technological advances and improvements in cost efficiency before it becomes commercially viable.

One associated cost is that of nuclear fusion plants and fuel. The hydrogen isotope tritium that is required for fusion exists naturally in the atmosphere, but it is so sparsely available that much of it is commercially produced, and a gram of it costs about US$30,000. While some scientists express confidence that tritium could be produced more readily in large quantities in the future, others express scepticism, and there is a risk that a dwindling tritium supply limits the contribution of fusion to our future energy needs.

Nuclear fusion would also have to show that it can work at much larger scales. The experiment produced about three megajoules of energy from about two megajoules of laser input, enough only to boil 10 kettles of water and far below the scale required for electrical grids. The most energy scientists have ever produced in a single fusion experiment is 59 megajoules over five seconds.
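To put these figures in perspective, the arithmetic can be sketched in a few lines of Python. This is only a rough illustration using the rounded numbers above. The kettle size (1 litre) and starting water temperature (20 °C) are our own assumptions, as are the function names.

```python
# Rounded figures from the article.
LASER_INPUT_MJ = 2.0     # laser energy delivered to the fuel
FUSION_OUTPUT_MJ = 3.0   # energy released by the fusion reaction

# Assumptions for the kettle comparison (not from the experiment).
KETTLE_LITRES = 1.0                  # assumed kettle size
START_TEMP_C = 20.0                  # assumed tap-water temperature
SPECIFIC_HEAT_J_PER_KG_C = 4186.0    # specific heat of liquid water


def energy_gain(output_mj: float, input_mj: float) -> float:
    """Ratio of energy released to energy delivered."""
    return output_mj / input_mj


def kettles_boiled(energy_mj: float) -> float:
    """Number of assumed kettles that could be heated to 100 degrees C."""
    mass_kg = KETTLE_LITRES  # 1 litre of water weighs about 1 kg
    joules_per_kettle = mass_kg * SPECIFIC_HEAT_J_PER_KG_C * (100.0 - START_TEMP_C)
    return energy_mj * 1_000_000 / joules_per_kettle


print(f"Gain: {energy_gain(FUSION_OUTPUT_MJ, LASER_INPUT_MJ):.2f}")
print(f"Kettles: {kettles_boiled(FUSION_OUTPUT_MJ):.1f}")
```

Under these assumptions the gain works out to 1.5 and the output to roughly nine kettles, in line with the comparison above.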

Commercially viable fusion plants would also require vast improvements in laser technology. The experiment's net gain did not take the energy efficiency of the lasers into account: they consumed about 300 megajoules, about a hundred times the total energy output from the fusion reaction.
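The gap between the headline gain and the whole-system picture can be made explicit with a short calculation. The sketch below uses the rounded figures reported here (about 2 megajoules delivered by the lasers, 3 megajoules released, 300 megajoules consumed by the lasers). The variable and function names are illustrative.

```python
# Rounded figures from the article, in megajoules.
LASER_DELIVERED_MJ = 2.0    # laser energy that reached the fuel
FUSION_OUTPUT_MJ = 3.0      # energy released by the fusion reaction
LASER_CONSUMED_MJ = 300.0   # energy the lasers consumed to fire


def ratio(numerator_mj: float, denominator_mj: float) -> float:
    """Simple energy ratio with a guard against a zero denominator."""
    if denominator_mj <= 0:
        raise ValueError("denominator must be positive")
    return numerator_mj / denominator_mj


# Gain counted against the energy delivered to the fuel.
headline_gain = ratio(FUSION_OUTPUT_MJ, LASER_DELIVERED_MJ)

# Gain counted against everything the lasers consumed.
whole_system_gain = ratio(FUSION_OUTPUT_MJ, LASER_CONSUMED_MJ)

# Share of the consumed energy that the lasers actually delivered.
laser_efficiency = ratio(LASER_DELIVERED_MJ, LASER_CONSUMED_MJ)

print(f"Headline gain:     {headline_gain:.2f}")
print(f"Whole-system gain: {whole_system_gain:.2f}")
print(f"Laser efficiency:  {laser_efficiency:.1%}")
```

On these numbers the headline gain is 1.5, but the whole-system gain is only 0.01: the reaction returned about 1 per cent of the energy the lasers consumed.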

A 30-Year Wait?

Previous experiments that claimed to have achieved fusion in 1958 and 1989 turned out to be erroneous observations, but these generated headlines all the same, with the London Daily Sketch proclaiming ‘a Sun of our own’ and the Daily Mail hailing the arrival of ‘unlimited fuel for millions of years’.

While the latest development appears to possess much greater scientific rigour, it must be noted that headlines rarely reflect the degree of uncertainty that remains over the timeline for nuclear fusion to become viable. Scientists note that it took 70 years of effort to achieve nuclear ignition, and a long-running joke in the community is that nuclear fusion fit for use is always 30 years away.

Despite about US$5 billion in private investment in nuclear fusion and major international projects, it may therefore be decades before nuclear fusion powers cities and homes. Justin Wark, a professor of physics at the University of Oxford, said that it is ‘highly unlikely that fusion will impact on a timescale sufficiently short to impact our current climate change crisis’. He added that anyone claiming fusion is the ‘great solution to the energy crisis’ is being misleading.

The achievement of nuclear ignition therefore represents one step in humanity’s progress towards harnessing nuclear fusion. Nevertheless, multiple hurdles and many years remain before nuclear fusion potentially becomes a reality for everyday use, and there remains a possibility that it never becomes viable.

As such, the claim that nuclear fusion has been solved, resulting in unlimited energy and making climate change mitigation unnecessary, is false.