Many users across different social media platforms have been uploading pictures of themselves and their friends or family to ChatGPT, instructing it to recreate those pictures in the art style of Studio Ghibli movies. Studio Ghibli is a Japanese animation studio known for its detailed and distinct animation style, and this trend has spread rapidly across the internet in the past week.

However, some posts have claimed that this is actually a ruse for OpenAI, which owns ChatGPT, to collect “biometric data” (unique human characteristics that can be used as individual identifiers) from the images being uploaded. Some further claim that this biometric data includes fingerprints and retina scans, which can be stored, sold, and possibly used for nefarious purposes in the future – such as bypassing biometric security systems.

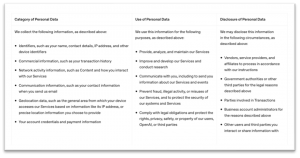

OpenAI’s Privacy Policy

It is true that by using ChatGPT (and agreeing to its terms and conditions), users consent to the collection of some personal data. Per OpenAI’s privacy policy, this includes data such as IP addresses, usage data, geolocation data, user information (such as email addresses or names), and “User Content,” which covers all “input” entered into ChatGPT, including files, images, and audio. This does include the images being uploaded as part of the Ghibli trend. According to OpenAI, some of this data is used to train and develop its services. Personal data collected can also be disclosed to third parties (such as government authorities) in certain circumstances – such as for legal reasons or to prevent illegal activity.

However, we could find no mention of specific biometric data collection – nor does this appear to be an explicitly defined category of data being collected through ChatGPT – although this in itself does not prove that such data collection is not taking place. We then looked further into how retina scans and fingerprints are commonly collected and catalogued.

Biometric Data Collection

Based on our research, it is very difficult or impossible to collect retina scans or fingerprints from a single image – particularly if the image is of low to medium resolution and was not specifically taken for the purposes of biometric data collection. A look across posts from users participating in the trend shows a large number of group pictures, alongside others without visible finger pads or direct eye contact with the camera, which further decreases the feasibility of biometric data collection.

In the case of fingerprints, extracting prints from images is “technically possible” under very specific conditions (such as high resolution, correct angles, and specific lighting). However, it is also highly impractical and, according to experts, not something that can be easily done from user-uploaded pictures that, more often than not, do not focus directly on finger pads.

Retina scans require specific scanning machines that use infrared light to capture the unique patterns of retinal blood vessels. It is not possible to scan a retina through a single picture. The technology to capture retina scans using less complex equipment does not exist yet, although some research is underway for medical diagnostic purposes. A similar ocular form of biometric data, iris recognition, requires less specialised equipment and has also been the subject of many privacy concerns. However, extracting iris data from an image still requires high resolution, close proximity, and an eye that is looking directly at the camera.

In summary, while the possibility of some images meeting the specific conditions necessary to collect such sensitive data cannot be fully excluded, the notion that user-uploaded images containing faces or fingers allow biometric data to be easily harvested is not accurate either. The claim posts also provide no firm evidence or compelling argument to support their statements.

We therefore give the claim that the recent AI Ghibli image trend is a ruse to collect biometric data a rating of likely false.

Fears over privacy and biometric data are not necessarily unfounded. Biometric security breaches have been known to occur, and critics have spoken out about how AI chatbots such as ChatGPT lack sufficient transparency when it comes to their data handling practices. Legislation on AI chatbots and data collection is also an ongoing concern for governments and is being updated (with variations across countries and jurisdictions) as technology rapidly advances.

As consumers navigating these concerns, having accurate facts is key to making informed choices. Not taking claims such as this one at face value is critical to prevent misinformation from spreading, as it can distort the discourse and prevent healthy debates or decision making. Reading privacy policies or terms and conditions is also a useful way to stay fully cognizant of what exactly we are consenting to before uploading data to chatbots or online platforms.