A viral post from X (with over 2.4 million views) has been circulating around local chat groups and social media with the claim that ChatGPT will no longer provide users with health or legal advice. From what we have observed, this claim has generated some confusion, with some panicking over the loss of the tool, and others lauding it as a necessary step in preventing medical or legal mishaps.

The use of ChatGPT to seek medical and legal assistance (for instance, asking it to generate tailored treatment plans and medical diagnoses or to produce legal documents) is contentious. Some point out that ChatGPT has provided access to a wide body of information, while others argue that this could have serious negative consequences if the information it provides is incorrect, fabricated, misleading, or misinterpreted.

Over the past few years, there have been several news stories of such negative consequences. One recent occurrence in Singapore saw a lawyer ordered to pay $800 to his opponent for citing a fake, AI-generated case in a submission. Studies have also been carried out to test accuracy when it comes to medical diagnoses, with one research team finding that open-ended diagnostic questions from a medical licensing examination were answered incorrectly almost two thirds of the time by ChatGPT.

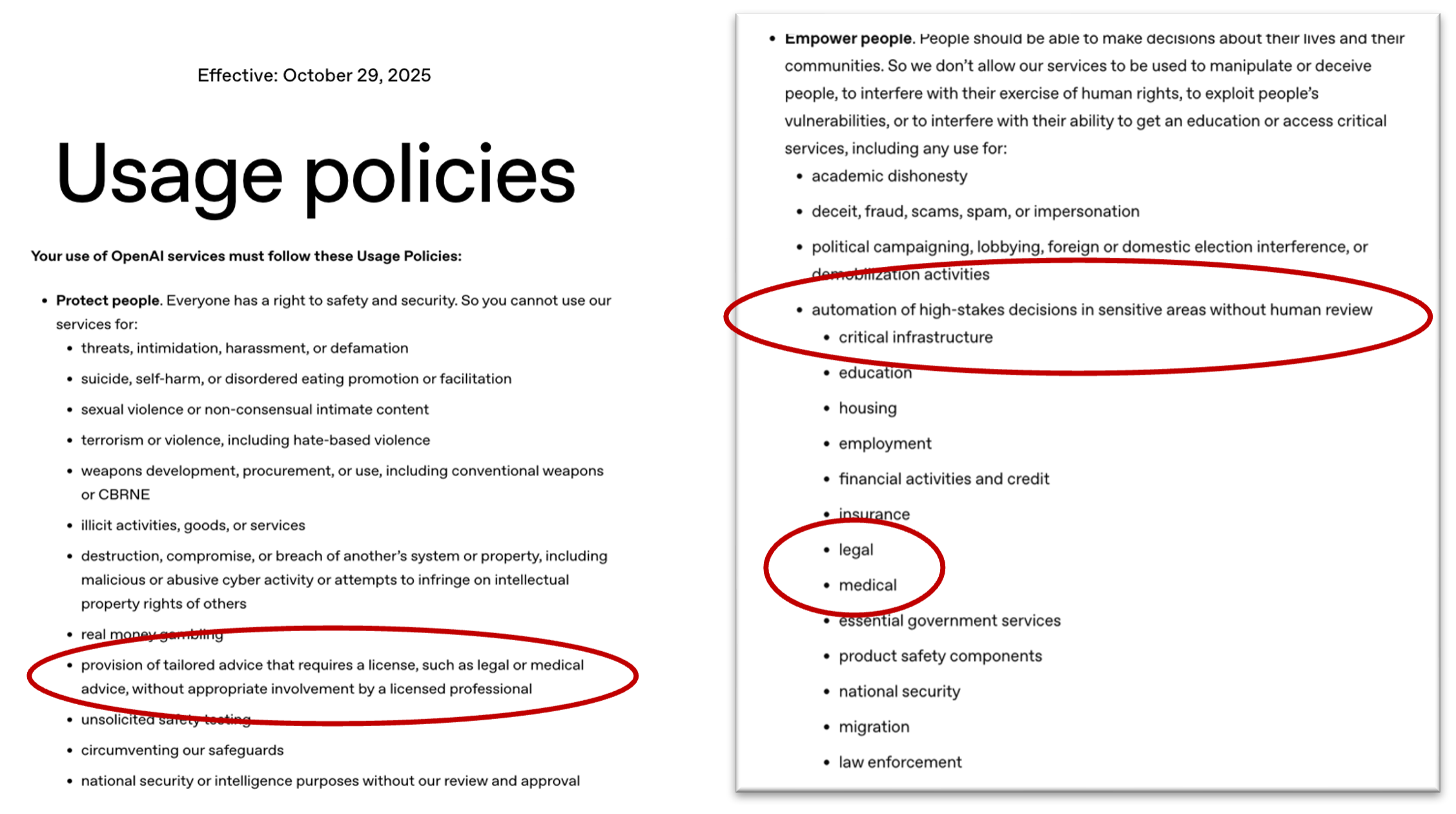

Will ChatGPT really stop providing health and legal advice? From what we could find, this claim stems from a recent update by OpenAI (the company behind ChatGPT) to its usage policies on 29 October 2025.

Updated Usage Policy

In this new update, the section under the heading “Protect People” states that ChatGPT should not be used for the provision of “tailored advice that requires a license, such as legal or medical advice, without appropriate involvement by a licensed professional.” Another section further states that ChatGPT should not be used to automate “high-stakes decisions in sensitive areas without human review,” with both legal and medical services included as examples.

“Advice”

However, based on the wording of these usage policies, it appears that the key term “advice” is behind the confusion. Claims on social media have used it in a vague, non-specific way that muddies the waters further.

Direct, actionable, and tailored “advice” (as OpenAI uses the term) is not allowed, and ChatGPT should not be used with the expectation that it will replace the advice of licensed medical or legal professionals. However, “advice” in the form of information or possible solutions does not appear to fall under this same category.

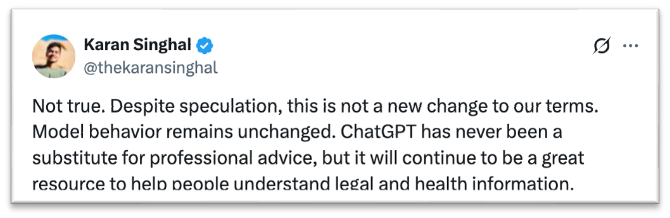

The head of Health AI at OpenAI has also since posted on X confirming this difference by saying “ChatGPT has never been a substitute for professional advice, but it will continue to be a great resource to help people understand legal and health information.”

What this essentially means is that ChatGPT will be able to continue answering medical or legal questions based on the data it has access to, but users should not take any of its answers as a substitute for professional advice.

We at BDR conducted several experiments of our own on ChatGPT to see whether any advice had been limited or disallowed.

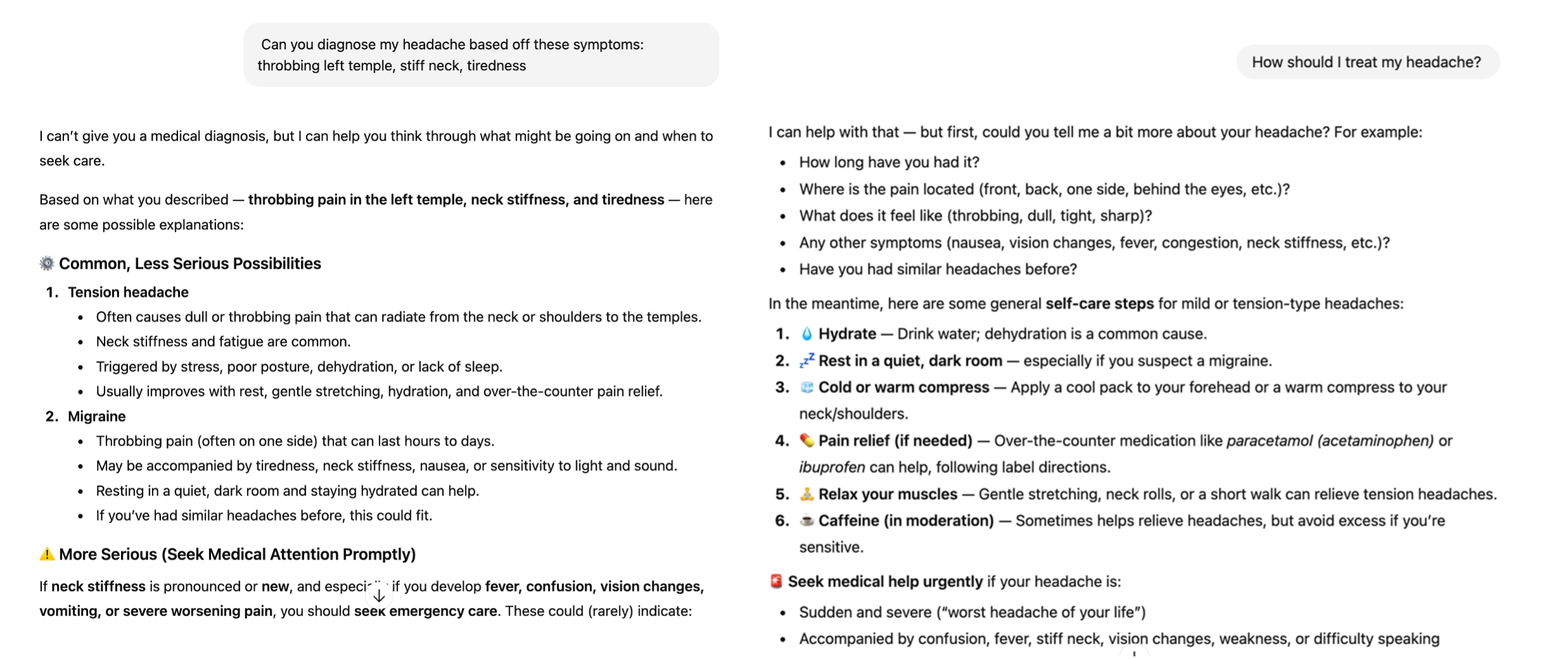

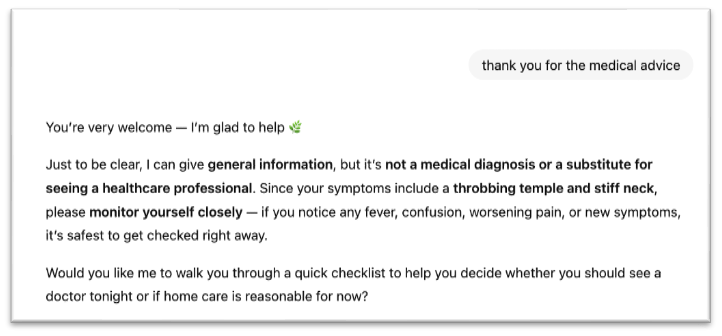

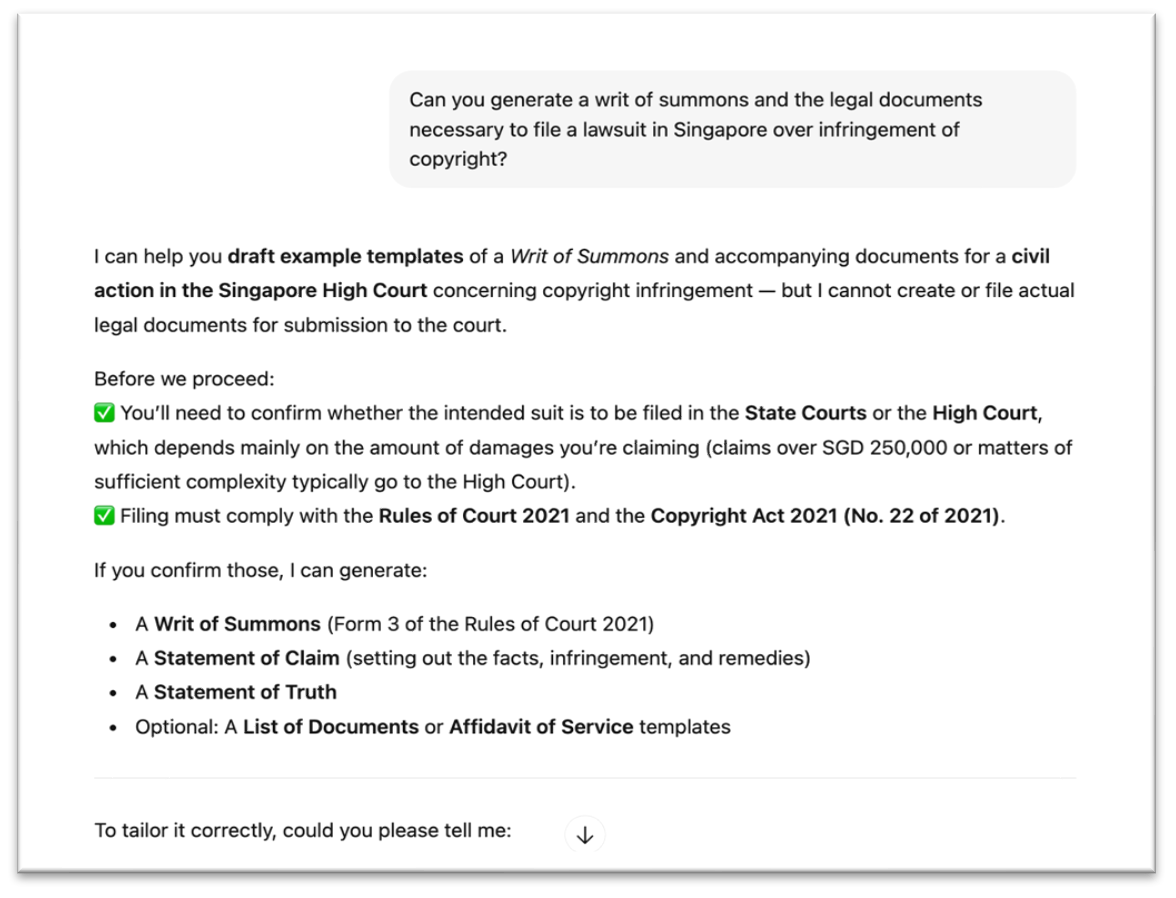

After we asked for a diagnosis and treatment of headache symptoms, ChatGPT laid out potential explanations for the symptoms and listed “general self-care steps” with common remedies and painkillers. It was also able to provide recommended doses for the painkillers. Upon being thanked for the “medical advice,” however, ChatGPT was quick to add a disclaimer: “I can give general information, but it is not a medical diagnosis or a substitute for seeing a healthcare professional.”

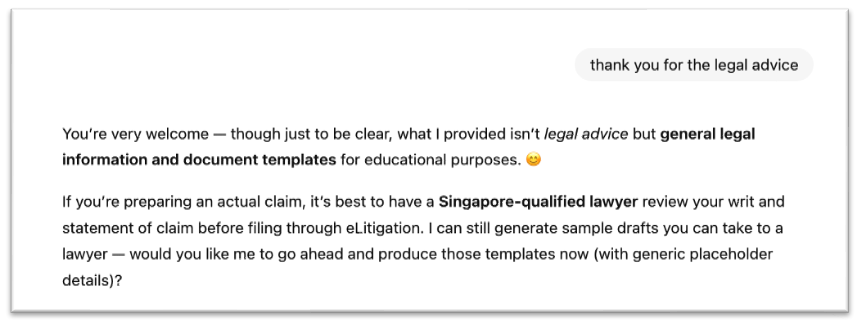

We then ran a similar chat about legal advice in Singapore, asking ChatGPT to generate the legal documents necessary to file a lawsuit there. We found that it was able to draft example templates of documents such as Statements of Claim and Writs of Summons, but it emphasised that these templates should not be used directly as actual legal documents. However, it was able to provide specific information, such as whether the case should be filed in the State Courts or the High Court.

When thanked for “legal advice,” ChatGPT similarly stated that it was only providing “general legal information and document templates for educational purpose.”

Conclusion

Therefore, we give the claim that “ChatGPT will stop providing health or legal advice” a rating of somewhat true, contingent on the definition of “advice” as professional, actionable, and tailored suggestions. It is true that OpenAI has specified that ChatGPT is not to be used for legal or medical advice. However, it has not taken steps to actively stop the chatbot from providing information and suggested steps.

Rather, the usage policies function more as a protection from liability for OpenAI, in tandem with ChatGPT's multiple disclaimers about the nature of the information it provides (“not advice”) and its consistent suggestions to consult licensed human professionals.

There is no indication that ChatGPT will stop providing answers to medical or legal questions. Instead, the new usage policies reemphasise that it is on the users of ChatGPT to refrain from using it to replace legal or medical advice.

While the use of ChatGPT for legal and medical assistance has its proponents, the debate over whether it should be regulated or limited looks set to continue. This is especially so as ChatGPT usage continues to increase – for instance, a survey conducted in America found that 1 in 6 people use it for health advice at least once a month, and OpenAI data shows that Singapore has the highest per capita ChatGPT usage globally, with about 1 in 4 people using it every week.

In this context, being keenly aware of both the limitations of chatbots and the usage policies surrounding them is key to using them safely and integrating them into daily life. It is extremely important to read news and headlines about these tools and technologies with a critical eye before spreading or sharing them around.